22 Spatial Cross-Validation

22.1 Cross-validation

22.1.1 Why?

Cross-validation can be used for evaluating an estimator performance and to compare different estimators.

Cross-validation may help choosing a kriging method (e.g. the type of kriging), a variogram model (e.g. spherical, exponential, or other configurations about the nugget, sill and range parameters) or a neighbourhood.

22.1.2 What is it?

Its principle is the following: at each point location, remove the sample value and estimate its value from the other samples. Then, compare actual \(z({\mathbf u})\) to estimated \(\hat{z}({\mathbf u})\) values using statistics on the errors \(\epsilon_{\beta}\):

\(\epsilon_{\beta} = z_{\beta}({\mathbf u}) - \hat{z}_{\beta}({\mathbf u})\,,\;\; \forall\; \beta=1,...,n_{VAL}\)

where \(n_{VAL}\) is the number of known data included in the validation subset.

Once a model is built (or trained or calibrated, according to its type) it can be used to make predictions. Usually, the interest of the modeler is to estimate a value for the attribute at hand at an unvisited geospatial location.

22.1.2.1 What about the accuracy of the prediction?

The only way to acknowledge about the accuracy is to make a new measurement in the point location where the prediction is made. This operation isn’t always possible for cost or technical constraints (e.g. we measured a dynamic variable for which we cannot get currently a measurement back at the time of the monitoring or survey). Therefore, it is possible to use some samples from the data at hand for validation. To make it properly works, an independent subset (called validation subset) must be created and used only for the purpose of measuring the model performance (and never participating to the training).

One can get useful information about the fitting capabilities of the model, including

- overfitting (model too complex which badly fits other data than training),

- underfitting (model too simple and unable to find relationships and patterns),

- underestimation (the model consistently predicts lower values than the actual values), and

- overestimation (the model consistently predicts higher values than the actual values).

22.2 Split the data to create subsets

The operation of splitting data into subsets can be usefull to destinate each subset to a distinct scope in the procedure of model building and validation. It is very common to split the whole dataset into at least two subsets:

- Training subset. The training subset is used to build / calibrate the model and generally it contains 60%-90% of the whole data. It means that only the information available in the training subset is actually used either to make the interpolation at unknown locations (during validation the unknown locations are the validation locations) or to recognize the parameters of the model (e.g. think to the parameters of the model variogram).

- Validation subset. The validation subset is used to validate the model by measuring the error of the estimation on 10%-40% of the data not used for training / calibration. Any error measure takes into consideration the difference of the measured vs predicted value. It means that any record belonging to the validation subset can be used (or “seen”) by the model during calibration.

22.2.2 Import data

tmean <- read.csv("XY_Tmean.csv")Dimension:

dim(tmean)

#> [1] 493 3

n <- dim(tmean)[1]

n

#> [1] 49322.2.3 Transform into simple feature

summary(tmean_sf)

#> Tmean geometry

#> Min. : 0.3347 POINT :493

#> 1st Qu.: 1.4522 epsg:32633 : 0

#> Median : 3.2497 +proj=utm ...: 0

#> Mean : 3.5548

#> 3rd Qu.: 5.0767

#> Max. :12.6371Map of points

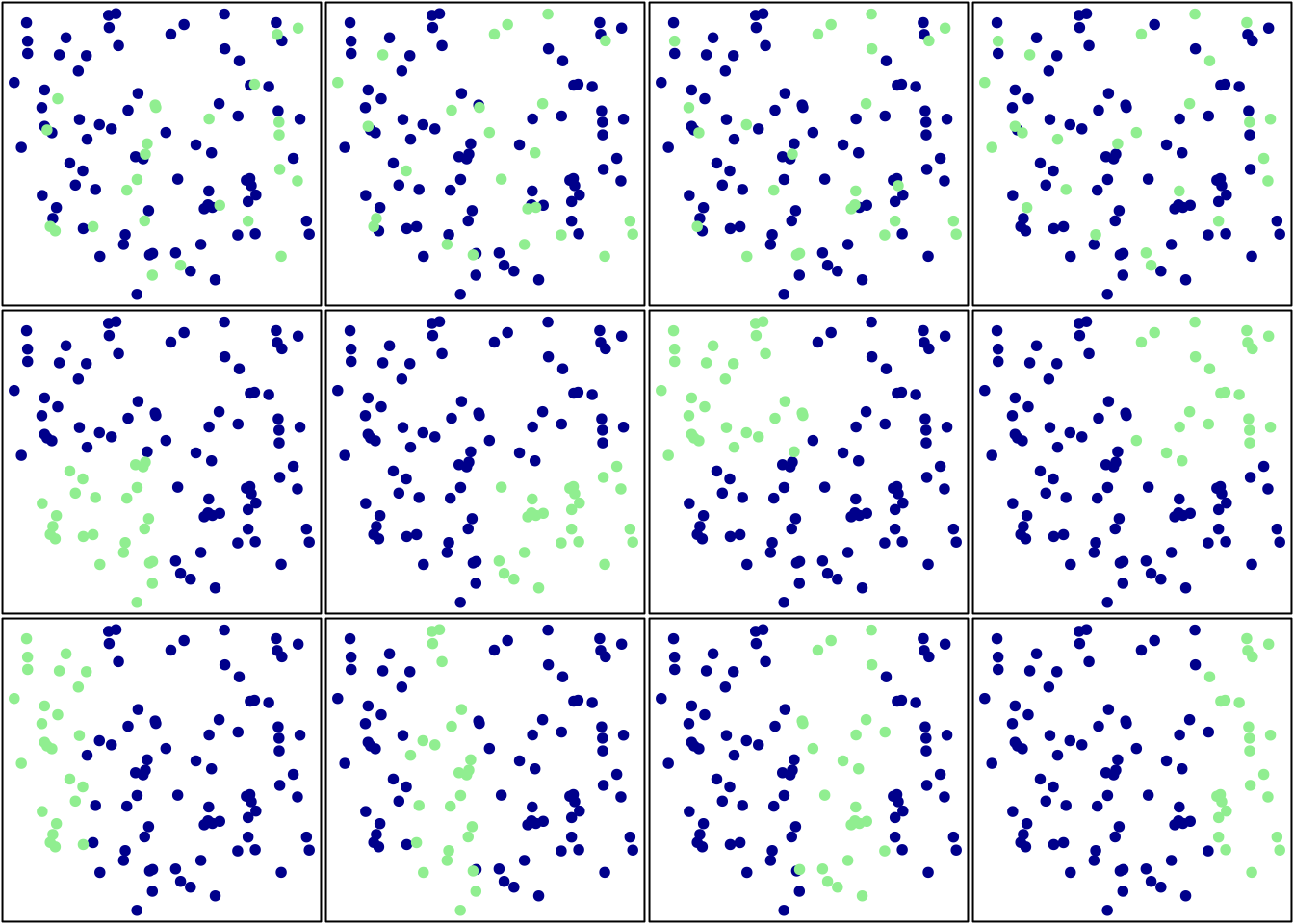

22.2.4 Validation subset

The definition of a random subset for validation purpose is crucial to evaluate the accuracy (or the error) of any interpolation technique (e.g. nearest neighbours, IDW, kriging, etc.).

The validation subset is made by more than few records (let’s say from 10% to 40% of the whole dataset) in order to get a good estimate of the error associated with the spatial interpolation.

In the following example, we can use 20% of the whole data to create the validation subset:

n_val <- n * 20 / 100

n_val

#> [1] 98.6Let’s round to the nearest integer, since we cannot select a fraction of a single record:

n_val <- round(n * 20 / 100,0)

n_val

#> [1] 99

val_idx <- sample(1:n, n_val)

val_idx

#> [1] 92 277 107 28 330 312 440 138 98 318 3 225 406 267

#> [15] 379 154 163 488 463 281 320 310 237 118 130 178 36 414

#> [29] 10 186 132 411 64 465 201 12 315 443 245 53 71 69

#> [43] 128 254 367 356 371 303 301 266 296 198 438 15 212 169

#> [57] 459 63 160 155 232 305 48 13 403 32 103 257 416 349

#> [71] 456 81 185 473 493 424 344 256 304 211 176 485 481 209

#> [85] 269 226 165 208 354 33 343 188 351 142 84 396 124 88

#> [99] 468Split into validation:

tm_val <- tmean_sf[val_idx,]

dim(tm_val)

#> [1] 99 222.2.5 Training subset

The residual subset remaining after the removal of the validation subset forms the training subset.

tm_tr <- tmean_sf[-val_idx,]

dim(tm_tr)

#> [1] 394 222.3 Seed

One can set the seed in the random number generator in order to get always the same list of random indexes. This can be useful in the case in which it is desirable to repeat the experiment in the same conditions.

set.seed(3008)

val_idx <- sample(1:n, n_val)

val_idx

#> [1] 388 385 387 462 436 381 116 146 448 204 43 49 459 325

#> [15] 453 166 460 125 468 226 12 232 243 304 67 423 256 11

#> [29] 111 45 425 162 149 221 474 276 246 118 75 97 316 212

#> [43] 193 300 74 330 234 433 223 318 15 441 168 473 173 137

#> [57] 294 250 353 307 384 249 184 27 217 485 247 261 337 20

#> [71] 229 406 415 144 117 192 39 366 2 421 10 390 358 44

#> [85] 467 338 163 4 109 491 225 251 449 38 335 153 55 450

#> [99] 478The seed definition helps to implement reproducibility and brings the randomness in the geospatial interpolation algorithms (e.g. Machine Learning algorithms) for using random numbers.

Random numbers are of two types: pseudo-random numbers and true random numbers. Pseudorandom numbers are numbers that appears to be random, but they are not truly random.

Typically, pseudorandom numbers (see Wikipedia > Pseudorandom number generator) can be generated using a seed value (which is provided by a user with the set.seed() function) which is then passed to an algorithm that uses the value to generate a new number.

22.4 Monte Carlo analysis

The error calculation is dependent of the particular subset selected by the random number generator, hence it should be convenient to repeat several times the splitting procedure with a different set of random number in each. One can calculate the error for each repetition and then calculate the average error. This procedure is called Monte Carlo analysis.

The Monte Carlo analysis (see Wikipedia > Monte Carlo method) follows this pattern:

- Define a domain of possible inputs (select the dataset to be used for spatial interpolation)

- Generate inputs randomly from a probability distribution over the domain (split the whole dateset in training and validation using the random number generator)

- Perform a deterministic computation of the outputs (calibrate the model using the training subset and predict with it at validation locations)

- Aggregate the results (calculate the error for each Monte Carlo repetition and compute the aggregated average error)

22.5 Error definition (optional)

We now define a custom function to calculate the root mean square error. Of course, there are different packages in R already providing the RMSE calculation (please, search for them by yourself). Here we use a custom RMSE function as an example and just to underscore a viable opportunity to set up any kind of custom error measurement.

22.5.1 Version 1

rmse_v1 <- function(M,P){

# INPUTS

# M : measurements

# P : predictions

n <- length(M)

E = (M - P)

S = E^2

M = sum(S) / n

R = sqrt(M)

R

}

rmse_v1( tm_val$Tmean , tm_val$Tmean )

#> [1] 0

rmse_v1( tm_val$Tmean , tm_val$Tmean[1:98] )

#> Warning in M - P: longer object length is not a multiple of

#> shorter object length

#> [1] 0.7167822.5.2 Version 2

rmse_v2 <- function(M,P){

# INPUTS

# M : measurements

# P : predictions

# Checek that M & P have the same size:

n1 <- length(M)

n2 <- length(P)

if(n1>n2 | n1<n2){

print("Input vectors have different size!")

return(NA)

}

n <- length(M)

E = (M - P)

S = E^2

M = sum(S) / n

R = sqrt(M)

R

}Now we can manage different lengths about actual and predicted values:

rmse_v2( tm_val$Tmean , tm_val$Tmean[1:98] )

#> [1] "Input vectors have different size!"

#> [1] NAAnyway, the second version of the RMSE function cannot handle the situation in which missing values are generated by the estimator, as an NA is returned:

# insert a dummy NA in the data:

actual = tm_val$Tmean

predicted = tm_val$Tmean

predicted[1] <- NA

rmse_v2( actual , predicted )

#> [1] NAIndeed, it may happen that the estimator returns NA in neighborhoods where an insufficient number of observations are available for interpolation (e.g. below the nmin parameter).

22.5.3 Version 3

The following third version of the RMSE function is created to overcome the previous situation:

rmse_v3 <- function(M,P){

# INPUTS

# M : measurements

# P : predictions

# Checek that M & P have the same size:

n1 <- length(M)

n2 <- length(P)

if(n1>n2 | n1<n2){

print("Input vectors have different size!")

return(NA)

}

n <- length(M)

E = (M - P)

S = E^2

M = sum(S, na.rm=TRUE) / n

R = sqrt(M)

R

}Indeed, the RMSE is correctly computed:

# insert a dummy NA in the data:

actual = tm_val$Tmean

predicted = tm_val$Tmean

predicted[1] <- NA

rmse_v3( actual , predicted )

#> [1] 0Do not forget that the result of the previous RMSE calculations were always zero, since we are comparing the measurements with themselves.

22.6 Assignment

Use the training and validation subsets to build and then validate the models of interpolation:

- NULL (global average)

- Nearest neighbour (proximity polygon)

- Nearest neighbours (local average)

- Inverse Distance Weighting (IDW)

- Kriging, using two different variogram models

Measure the performance by each model on the validation subset using the custom RMSE function above.

Finally, compare models and select the most performing one (the model having the lowest error).